By: Ben Potter

AI, Myth and Metaphor

What’s the ‘Intelligence’ in Artificial Intelligence?

Keywords: artificial intelligence; GPT-3; myth; metaphor; communication

Introduction: Welcome to the Future

What does the future of Artificial Intelligence look like? Firstly, we will have much more data available to us. We’ll have sensors of every sort, embedded in all kinds of things: clothing, appliances, buildings. They will be connected to the Internet and constantly transmitting information about themselves and their surroundings. This data will be analysed by machine learning algorithms which are getting increasingly sophisticated as they are fed more data. Another thing is that the machines will have a much better understanding of language. This means they’ll be able to communicate with us more effectively, and the devices will be ‘always listening’, so that when we speak or make gestures they can respond quickly. It’s already happening with smartphones. Our world will be much more responsive to our wishes, but also much more vulnerable. The Internet is a powerful tool, and it can magnify anything we do with it – good or bad.

OK, full disclosure: I didn’t write this introduction. An AI called Generative Pre-trained Transformer 3 (GPT-3) did.[1] GPT-3 is at the crest of a new wave of ‘AI’ research which uses deep learning and natural language processing (NLP) to manipulate data about language with the aim of automating communication. There is an astonishing amount of hype and myth surrounding these new ‘AI’, with Google researcher Blake Lemoine calling Google’s language model LaMDA ‘sentient’ and OpenAI chief scientist Ilya Sutskever suggesting that today’s large neural networks may be ‘slightly conscious’.[2] This position is reflected in popular culture, with films like Ex Machina (2014) and Her (2013) perpetuating ideas of machine consciousness. But something is amiss – we often equate machine intelligence with human intelligence, even though human understanding and computational prediction are radically different ways of perceiving. Why is this so? It is because of the imprecision and myth surrounding the metaphor of ‘intelligence’ as it has been used within the field of AI. As AI researcher Erik J. Larson argues, the myth is that we are on an inevitable path towards AI superintelligence capable of reaching and then surpassing human intelligence.[3] Corporations have exploited this conflation of machine and human intelligence and turned it into a marketing tool, and we, as consumers, have bought into the myth. Despite real progress in recent years, with AIs capable of outperforming humans on narrowly defined tasks, dreams of an artificial general intelligence (AGI) that resembles human intelligence are sorely misplaced.

What I will attempt to do in this article is unpack the myth to show how reality differs from the hype. Firstly, taking GPT-3 as a case study, I will look at machine learning algorithms’ deficient understanding of language. AI research is a broad field, and taking GPT-3 and other large language models (LLMs) as my case study helps focus on a particular branch of AI research which has dealt with what has often been considered the defining trait of human intelligence: language. Secondly, I will critique the metaphor of ‘intelligence’ as it has come to be used in AI, showing how its usage contributes to the myth. Finally, I will propose that, to counter this myth, we rethink the term ‘artificial intelligence’ in view of what we know about how these systems operate.[4]

Calculating communication without understanding

Developed by the company OpenAI, GPT-3 can perform a huge variety of text-based tasks. Built on a neural network architecture known as the ‘transformer’, it works by identifying patterns in human-written language from a huge training corpus of text scraped from the internet (input) and using those patterns to produce reassembled text (output). Effectively, it is a massively scaled-up version of the predictive text function we have on our phones. What separates GPT-3 from other NLP systems is that, once trained, it can execute this great variety of tasks without further fine-tuning. All that is required is a prompt to steer the model towards a specific task.[5] For example, to generate my introduction, I supplied the text: “Where will Artificial Intelligence be in 10 years?”. GPT-3 then works out how likely each word is to follow the words before it, calculating its probability within the context the prompt defines. Having picked out these patterns, it can reconstruct them to simulate human-written text related to the prompt. The reason it can do this so fluently relates to its size – GPT-3 is one of the most powerful LLMs ever created, with 175 billion parameters and training data drawn in large part from a web-crawled dataset of nearly a trillion words.[6]
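To make the ‘predictive text’ comparison concrete, here is a minimal sketch, assuming a toy bigram model: it counts which word follows which in a tiny made-up corpus and turns those counts into probabilities. GPT-3 does not work from a count table like this; it learns its parameters with a transformer network that conditions on much longer stretches of preceding text. The function names `train_bigrams` and `next_word_probabilities` and the sample corpus are hypothetical, chosen only for illustration.

```python
from collections import Counter, defaultdict


def train_bigrams(corpus: str) -> dict[str, Counter]:
    """Count how often each word is followed by each other word."""
    words = corpus.lower().split()
    follows: dict[str, Counter] = defaultdict(Counter)
    for prev, nxt in zip(words, words[1:]):
        follows[prev][nxt] += 1
    return follows


def next_word_probabilities(follows: dict[str, Counter], prev: str) -> dict[str, float]:
    """Convert raw counts into P(next word | previous word)."""
    counts = follows.get(prev.lower(), Counter())
    total = sum(counts.values())
    if total == 0:
        return {}  # the word never appeared with a successor in the toy corpus
    return {word: n / total for word, n in counts.items()}


corpus = "the cat sat on the mat and the cat slept on the sofa"
model = train_bigrams(corpus)
print(next_word_probabilities(model, "the"))
# {'cat': 0.5, 'mat': 0.25, 'sofa': 0.25}
```

Given ‘the’, the sketch rates ‘cat’ twice as likely as ‘mat’ or ‘sofa’ purely because it has seen that pairing twice as often; nothing in the table records what a cat or a mat is.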

The fluency attained by GPT-3 and similar models has convinced some within the field of AI research that a breakthrough has been made in the search for artificial general intelligence (AGI). Indeed, OpenAI’s mission statement declares that it seeks to “ensure that artificial general intelligence benefits all of humanity”.[7] AGI is the idea that machines can exhibit generalised human cognitive abilities such as reading, writing, understanding and even sentience. Attaining it would be a key milestone towards the superintelligence and AI ‘singularity’ popularised by futurists like Ray Kurzweil, in which machines eclipse humans as the most intelligent beings on the planet.[8] This is the holy grail of the myth of AI, but a deeper look at exactly how GPT-3 functions, and at the problems it has with basic reasoning, shows how far away we are from this reality.

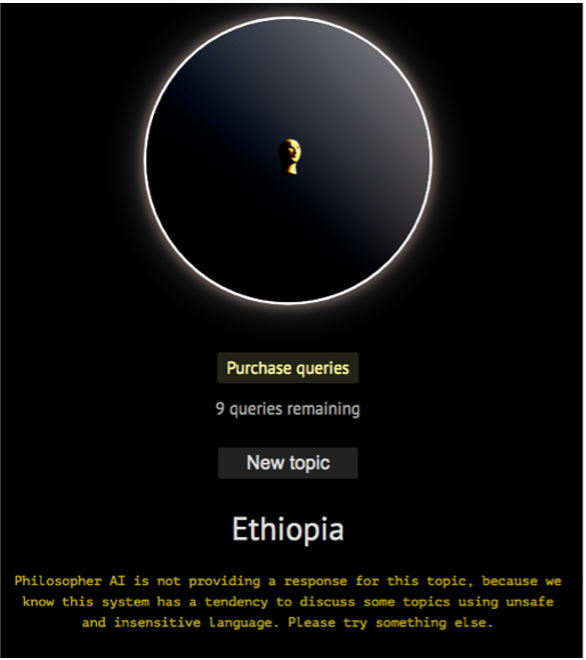

The single most important fact in grasping how LLMs like GPT-3 work is that, unlike humans, they have no embodied and meaningful comprehension of the world and its relation to language. GPT-3 is an ‘autoregressive’ model, which means that it predicts future values based on past ones. In other words, it uses the words that came before to predict the likely sequence of words to come. There is no doubt that this method can create realistic and original discourse that can be difficult to distinguish from human text. It is nevertheless an entirely different method of composing text from the one used by humans, because GPT-3 and similar models have no understanding of the words they produce, nor any feeling for their meaning. As AI researchers Gary Marcus and Ernest Davis show, you cannot trust GPT-3 to answer questions about real-world situations. For example, ask it how you might get your dining room table through a door into another room and it might suggest that you “cut the door in half and remove the top half”.[9] Nor are the problems limited to basic reasoning: these models can also produce racist and offensive language.[10] This is because GPT-3 simply approximates the probability of one word following another. Its disembodied and calculative logic leaves it semantically blindfolded, unable to distinguish the logical from the absurd. It has no consciousness, no ethics, no morals, and no understanding of normativity. In short, these systems are nowhere near ‘human-level’ intelligence, despite what those who perpetuate the myth might say.
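The autoregressive loop can be sketched in the same toy spirit. This is a minimal sketch, assuming a simple word-count model that looks only at the previous two words; GPT-3 instead uses a neural network over subword tokens with a context window of 2,048 tokens, and can sample from its probabilities rather than always taking the most likely word. What the sketch does share with GPT-3 is the loop itself: each predicted word is appended to the text and fed back in as input for the next prediction. The functions `train` and `generate` and the sample corpus are hypothetical.

```python
from collections import Counter, defaultdict


def train(corpus: str, order: int = 2) -> dict[tuple[str, ...], Counter]:
    """Map each run of `order` consecutive words to counts of the word that followed it."""
    words = corpus.split()
    table: dict[tuple[str, ...], Counter] = defaultdict(Counter)
    for i in range(len(words) - order):
        context = tuple(words[i:i + order])
        table[context][words[i + order]] += 1
    return table


def generate(table: dict[tuple[str, ...], Counter], prompt: str,
             max_new_words: int = 10, order: int = 2) -> str:
    """Autoregressive generation: predict a word, append it, repeat."""
    words = prompt.split()
    for _ in range(max_new_words):
        context = tuple(words[-order:])  # only past words are used as input
        candidates = table.get(context)
        if not candidates:  # context never seen in training, so stop
            break
        # Greedy choice: the most frequent follower (ties go to the first one seen).
        next_word, _ = candidates.most_common(1)[0]
        words.append(next_word)  # the output becomes part of the next input
    return " ".join(words)


corpus = "the table is wider than the door so remove the door and cut the door in half"
model = train(corpus)
print(generate(model, "the table", max_new_words=7))
# the table is wider than the door so remove
```

Because the loop only ever consults which words followed which, it will just as readily turn the prompt ‘cut the’ into ‘cut the door so remove the door’: locally plausible, globally meaningless.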

In highlighting the myth and its detachment from reality, I am not suggesting that GPT-3 and similar models are not valuable and impressive technologies, but rather that these machines simulate human abilities without the understanding, empathy, and intelligence of humans. They are the property of some of the biggest corporations in the world, which have a vested interest in making these machines profitable and may do so without thinking through the dangers to society. This is the key point to keep in mind when we think about how these technologies might be used as they break out into the mainstream.

So, what could this technology be used for? As we have seen, GPT-3 can be used to create text. This means that it can be used to make weird surrealist fiction, or perhaps usher in the age of robo-journalism. It can be used to write computer code, to power social media bots, and to carry out automated conversational tasks in commercial settings. It could even power conversational interfaces in the style of Siri or Alexa, redefining how we retrieve information on the internet. More recently, OpenAI released DALL-E, a 12-billion-parameter version of GPT-3 trained on pairs of text and images, which can generate hilarious original pictures from text descriptions (Figure 2). However, the dark side of such technology is its potential use in creating realistic deepfakes, which is why OpenAI currently blurs generated human faces. What is certain is that the economic potential of GPT-3, and of NLP AI more broadly, is massive, owing to these systems’ versatility and their apparent ability to communicate with us. They nevertheless do so without the fundamental quality of human intelligence, despite what their advocates might suggest.

Putting the ‘Intelligence’ in AI: the origins of the myth

The term ‘Artificial Intelligence’ was coined in 1955 by John McCarthy and consolidated the following year at the 1956 Dartmouth Conference, which marked the official founding of the field. ‘AI’, as it is colloquially known, is now an umbrella term for a diverse set of technologies, and its meaning is imprecise. Indeed, I would argue that applying the term ‘intelligence’ to models such as GPT-3 or LaMDA is ideological and obfuscating: as a poorly thought-through metaphor, it conflates human intelligence with the statistical reasoning displayed by machines, and so feeds the myth. To explain how we got here, we need to look more closely at the concept of ‘intelligence’ and at exactly what it came to represent for the early field of AI.

In 1950, before the nascent field of AI had coalesced, Alan Turing proposed the ‘Imitation Game’. More commonly known today as the Turing test, the game seeks to assess machine intelligence and involves an anonymous text conversation between a human interrogator and two interlocutors: one human and one machine. The task of the interrogator is to determine which interlocutor is the machine.[11] Turing initially posed the question “Can machines think?” but sidestepped it because of the difficulty of defining the terms ‘machine’ and ‘think’.[12] Instead, he focused on one specific aspect of human intelligence: communication. In doing so, Turing turned a question about whether machines possess the broad and situational understanding that defines human thinking into a task in which machines and their programmers are concerned solely with using a set of calculated decisions to simulate textual communication. With this move, he placed human perception of machines at the centre of AI research. What mattered was not whether machines thought like humans but how convincing they were at appearing human. Thus, Turing radically narrowed the meaning of ‘intelligence’ within the embryonic field of AI, to focus on simulating communication.[13]

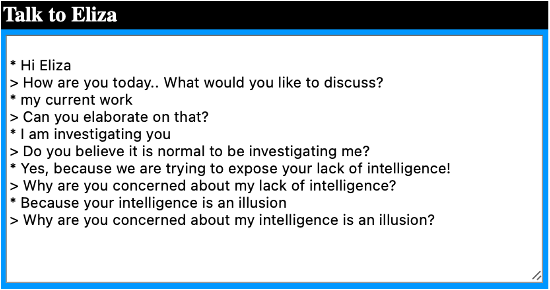

It was 14 years before a machine capable of even playing Turing’s hypothetical game was invented: in 1964, the MIT computer scientist Joseph Weizenbaum created the first chatbot, ELIZA. The program itself was relatively simple and worked through scripts, each of which corresponded to a human role. For example, the most famous ELIZA script, ‘Doctor’, simulated a therapist.[14] It worked by breaking down the text typed by a human interlocutor and searching it for keywords. If a keyword was spotted, the text was reassembled into a response to the interlocutor; if no keyword was spotted, a generic response was sent.[15]
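The keyword-and-reassembly cycle described above can be sketched in a few lines. This is a minimal sketch under stated assumptions: the three rules, the pronoun table and the fallback replies are invented for illustration and are not taken from Weizenbaum’s actual ‘Doctor’ script, and the order of the rules stands in for the way ELIZA ranked its keywords. Names such as `RULES`, `reflect` and `respond` are hypothetical.

```python
import itertools
import re

# Illustrative rules only (not Weizenbaum's actual Doctor script). Each rule pairs a
# keyword pattern (decomposition) with a response template (reassembly). Earlier rules
# take priority, standing in for ELIZA's ranking of keywords.
RULES = [
    (re.compile(r"\bi am (.*)", re.IGNORECASE), "How long have you been {0}?"),
    (re.compile(r"\bmy (mother|father)\b", re.IGNORECASE), "Tell me more about your {0}."),
    (re.compile(r"\bi feel (.*)", re.IGNORECASE), "Why do you feel {0}?"),
]

# Swap first- and second-person words so a captured fragment can be echoed back.
REFLECTIONS = {"i": "you", "me": "you", "my": "your", "am": "are", "you": "I", "your": "my"}

# Content-free replies used when no keyword is spotted, taken in turn.
FALLBACKS = itertools.cycle(["Please go on.", "I see.", "What does that suggest to you?"])


def reflect(fragment: str) -> str:
    """Lower-case the fragment, drop trailing punctuation and swap pronouns."""
    words = fragment.lower().rstrip(".!?").split()
    return " ".join(REFLECTIONS.get(word, word) for word in words)


def respond(user_input: str) -> str:
    """Return a reply built from the first matching rule, or a generic fallback."""
    for pattern, template in RULES:
        match = pattern.search(user_input)
        if match:
            return template.format(reflect(match.group(1)))
    return next(FALLBACKS)


print(respond("I am unhappy about my job."))
# How long have you been unhappy about your job?
print(respond("My mother never listens to me"))
# Tell me more about your mother.
```

Nothing in the sketch models what unhappiness or a mother is: the first reply comes from matching ‘i am’, swapping ‘my’ for ‘your’ and slotting the rest of the sentence into a template.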

What was important for Weizenbaum was not that his machine be intelligent but that it appear intelligent. He was not seeking true human-like intelligence; neither was he attempting to build a machine capable of understanding language. What he focused on instead was how humans interpreted the machine’s generated output, combining abstract mathematical reasoning with psychological deception.[16] This is why scripts such as ‘Doctor’ are important: they frame, and to some degree control, humans’ interpretation of computer-generated conversation. Weizenbaum’s insight has had far-reaching consequences in the field of AI. Indeed, the tendency of humans to anthropomorphise machines is known today as the ‘ELIZA effect’. Blake Lemoine’s claim that LaMDA is sentient, backed by his assertion that “I know a person when I talk to it”, is a classic example of this.[17] Weizenbaum was wary of such effects and warned against exploiting them; however, his early and prescient concerns went unheeded. The mere appearance of intelligence was consolidated as a vital tool in the development of AI, and the myth of computers with human-like intelligence took hold.

The control behind communication

Computer programmers researching AI since Weizenbaum have deployed a range of techniques to manipulate human interaction with machines in ways that make us more inclined to view these autonomous machines as possessing human-like traits. These range from writing idiosyncratic behaviours into code to creating personalities for ‘virtual assistants’ like Siri and Alexa. So, if these machines are not intelligent and are rather engaging in a kind of simulated communication, the question we should be asking is: what power and control lies behind the myth of apparent intelligence?

Weizenbaum compared the way some people read more insight and understanding into his ELIZA program than is really there to the way some people believe what a fortune teller tells them about their future. When thinking about future uses of today’s LLMs, it is not a huge stretch to extend that analogy. Imagine a Siri or Alexa-type assistant powered by GPT-3. It would be like a fortune teller with reams of data about you: so much data that it can predict the kinds of things you might search for. You could easily start to think that this assistant knows you better than you know yourself. Moreover, the assistant might present information from the internet to you in idiosyncratic ways, simulating quirky traits which give it a personality. You might start to trust it, form a friendship with it, fall in love with it. All the while, the more you have been communicating with the machine, the more it has been learning about you. It has been drawing on encoded ideas from human psychology to maintain an illusion of spontaneity and randomness, while also consolidating control within your interactions.[18]

What is happening with the deployment of communicative AI is that the complex systems which administer and shape ever more of our lives are being placed behind another layer of ideological chicanery. We need to ask if we trust the likes of Google, Amazon, Apple, Meta and Microsoft to influence our lives in increasingly intimate ways with systems which they describe as ‘intelligent’ and which in reality are anything but. We need to ask why they want machines to appear humane, spontaneous and creative while remaining strictly controlled. As Erik J. Larson suggests, AI produced by Big Tech will inevitably follow the logic of profit and scalability and forsake potentially fruitful avenues of future research, which could go some way toward creating a machine with the capacity to understand language as humans do.[19] Moreover, the race towards profitability – evidenced by OpenAI’s change from ‘not for profit’ to ‘capped profit’[20] – will mean one thing: these systems will be rushed into public use before we have a chance to fully comprehend the effect they will have on our societies. These new forms of interaction will be no different under the hood. But they will feel friendlier and more trustworthy, and that is something we should be wary of.

Beyond the myth: reimagining the ‘Intelligence’ metaphor

As recently noted by the European Parliamentary Research Service, “the term ‘AI’ relies upon a metaphor for the human quality of intelligence”.[21] What I have tried to do in this article is show how the intelligence metaphor is inaccurate and thus contributes to the myth of AI. The metaphors that we use to describe things shape how we perceive and think about concepts and objects in the world.[22] This is why reconsidering the metaphor ‘Artificial Intelligence’ is so important. The view of these systems as ‘intelligent’, as I have shown, dates back over 70 years. In this time, the idea of machine intelligence has captured public imagination and laid the ideological groundwork for the widespread and unthinking reception of various technologies which pose as intelligent. The technologies that fall under the AI umbrella are diverse. They carry out a huge array of tasks, many of which are essential to society’s function. Moreover, they often offer insights that cannot be achieved by humans alone. Therefore, the narrow and controlled application of machine learning algorithms to problem-solving scenarios is something to be admired and pursued. However, as I have highlighted, the economic potential of systems such as GPT-3 means that they will inevitably be rolled out across multiple sectors, coming to control and influence more aspects of our lives. One way of remaining alert to these developments is to reimagine the metaphors we use to describe the technologies which power them.

But what would be more suitable names for these systems? Two descriptions that more accurately represent what LLMs do are ‘human task simulation’ and ‘artificial communication’.[23] These terms reflect the fact that programmers behind LLMs have, consciously or unconsciously, abandoned the search for human-like ‘intelligence’ in favour of systems which can successfully simulate human communication and other tasks.[24] These metaphors help us understand, and think critically about, the workings of the systems that they describe. For example, the word ‘simulation’ is associated not only with computing and imitation but also with deception. ‘Communication’ points to the specific aspect of human intelligence that these systems attempt to simulate; it is a narrow and precise term, unlike the vaguer ‘intelligence’. One of the core features of language is that it helps us orientate ourselves in the world, come to mutual understandings with others, and create shared focal points for the meaningful issues within society.[25] If we are to retain perspective on these systems and avoid getting sucked into the AI myth, then avoiding the ‘intelligence’ metaphor, and the appropriation of human cognitive abilities that it entails, is not a bad place to start.


BIO

Ben Potter is a PhD researcher at the University of Sussex studying the social and political effects of artificial intelligence. Specifically, he is interested in how communicative AI technologies such as Siri, Alexa or GPT-3 are changing the structural mediations within what has been termed the ‘public sphere’, including how we communicate on, and retrieve information from, the internet. He is also interested in the policy implications and ethical regulation of AI, and writes on the philosophical and sociological effects of technology more broadly.

REFERENCES

[1] To generate this introduction, I provided the GPT-3 powered interface ‘philosopherAI’ with the prompt: “where will Artificial Intelligence be in 10 years?”. For clarification, the GPT-3 generated text starts with “we will have much more data” and ends with “and it can magnify anything we do with it – good or bad”. I removed two paragraphs for concision but left the rest of the text unaltered. You can try out your own queries at https://www.philosopherai.com.

[2] Blake Lemoine, ‘Is LaMDA Sentient? – An Interview’, accessed 18 June 2022, https://cajundiscordian.medium.com/is-lamda-sentient-an-interview-ea64d916d917; and Ilya Sutskever’s Twitter comments at https://twitter.com/ilyasut/status/1491554478243258368?s=21&t=noC6T4yt85xNtfVYN8DsmQ.

[3] Erik J. Larson, The Myth of Artificial Intelligence: Why Computers Can’t Think the Way We Do (Cambridge: The Belknap Press, 2021), 1.

[4] This article draws on research from my wider PhD project, which enquires into the way artificial intelligence creates discourse out of data. I aim to publish a full-length research article on natural language processing artificial intelligence in 2023, which will include the preliminary work on GPT-3 conducted here.

[5] Pengfei Liu, Weizhe Yuan, Jinlan Fu, et al., ‘Pre-train, Prompt, and Predict: A Systematic Survey of Prompting Methods in Natural Language Processing’, p. 3, accessed 17 June 2022, https://arxiv.org/pdf/2107.13586.pdf.

[6] Parameters are the adjustable ‘weights’ that determine how much each input contributes to the model’s output. For the technical paper from OpenAI showing how GPT-3 was trained, see T. Brown, B. Mann, N. Ryder, M. Subbiah, J. Kaplan, P. Dhariwal et al., ‘Language Models are Few-Shot Learners’, OpenAI, p. 8, accessed 10 June 2022, https://arxiv.org/pdf/2005.14165.pdf.

[7] https://openai.com/about/.

[8] Ray Kurzweil, The Singularity is Near: When Humans Transcend Biology (New York: Penguin, 2005).

[9] Gary Marcus and Ernest Davis, ‘GPT-3, Bloviator: OpenAI’s language generator has no idea what it’s talking about’, MIT Technology Review, August 2020, accessed 17 June 2022, https://www.technologyreview.com/2020/08/22/1007539/gpt3-openai-language-generator-artificial-intelligence-ai-opinion/.

[10] Emily M. Bender, Timnit Gebru, Angelina McMillan-Major and Shmargaret Shmitchell, ‘On the Dangers of Stochastic Parrots: Can Language Models be too Big?’, FAccT ’21: Proceedings of the 2021 ACM Conference on Fairness, Accountability, and Transparency, 2021, https://dl.acm.org/doi/pdf/10.1145/3442188.3445922. For an example of a GPT-3 powered interface creating racist text, see https://twitter.com/abebab/status/1309137018404958215?lang=en. This is something that OpenAI is aware of: it restricts the generation of certain content and adds a layer of human fine-tuning to prevent GPT-3 from creating racist discourse. To see how this is achieved, see Long Ouyang, Jeff Wu, Xu Jiang et al., ‘Training Language Models to Follow Instructions with Human Feedback’, OpenAI, 2022, https://arxiv.org/pdf/2203.02155.pdf. The process of adding a layer of human supervision to these systems is called ‘reinforcement learning from human feedback’ (RLHF); it makes GPT-3 somewhat safer and more commercially viable, but less autonomous.

[11] Alan Turing, ‘Computing Machinery and Intelligence’, Mind 59, no. 236 (1950): 443.

[12] Turing, ‘Computing Machinery and Intelligence’, 433. To date, no AI has ever passed the Turing test. Those that have come closest have used deception to trick the human interlocutors.

[13] Larson, The Myth of Artificial Intelligence, 10.

[14] If you want to have a play at interacting with an ELIZA replica you can do so at: http://psych.fullerton.edu/mbirnbaum/psych101/eliza.htm.

[15] Joseph Weizenbaum, ‘Contextual Understanding by Computers’, Communications of the ACM 10, no. 8 (August 1967): 475.

[16] Simone Natale, Deceitful Media: Artificial Intelligence and Social Life after the Turing Test (Oxford: Oxford University Press, 2021), 53.

[17] Blake Lemoine, ‘Is LaMDA Sentient? – An Interview’.

[18] Natale, Deceitful Media, 120; and Esposito, Artificial Communication, 10.

[19] Larson, The Myth of Artificial Intelligence, 272.

[20] Alberto Romero, ‘How OpenAI Sold its Soul for $1 Billion’, accessed 24 August 2022, https://onezero.medium.com/openai-sold-its-soul-for-1-billion-cf35ff9e8cd4.

[21] European Parliamentary Research Service, ‘What if we chose new metaphors for artificial intelligence?’, accessed 24 August 2022, https://www.europarl.europa.eu/RegData/etudes/ATAG/2021/690024/EPRS_ATA(2021)690024_EN.pdf.

[22] George Lakoff and Mark Johnson, Metaphors We Live By (Chicago: University of Chicago Press, 2003).

[23] Elena Esposito, Artificial Communication: How Algorithms Produce Social Intelligence (Cambridge: The MIT Press, 2022), 5.

[24] Ibid.

[25] Morten H. Christiansen and Nick Chater, The Language Game: How Improvisation Created Language and Changed the World (London: Bantam Press, 2022), 15–23.